
Akash Tanwar

In this article

Most AI support vendors pitch 100% automation as the target. Production reality across honest deployments is 70-85% end-to-end resolution, with the remaining 15-30% handed off cleanly to human agents. That remainder is not a capability gap. It is five specific ticket categories where escalation is the correct answer: crisis and safety-adjacent conversations, identity verification requiring document review, active fraud and account takeover claims, regulatory and legal inquiries, and sensitive life-event tickets like bereavement and estate handoffs. This piece explains why AI fails each category at the architecture level, what clean escalation looks like, and a five-step framework for evaluating any AI support vendor's escalation design. Named incidents at Klarna, Air Canada, and DPD demonstrate what happens when automation maximalism wins the internal debate. Fini's architecture treats escalation as a first-class capability, with 70-85% end-to-end resolution and documented handoff paths for the remainder.

Every AI support demo ends with a slide showing 100% automation as the target. The CEO nods. The CX team nods. Somebody writes "end human support by Q4" into the OKRs.

Take a step back. The five ticket categories below are where every honest AI deployment draws the line. A vendor that tells you otherwise is selling you a liability, not a product.

Table of Contents

What Is AI Support Escalation, and Why Does It Matter

AI-Resolvable vs Escalation-Required Tickets: A Direct Comparison

Why 100% AI Automation Is a Dangerous Target

Ticket #1: Crisis and Safety-Adjacent Support Conversations

Ticket #2: Identity Verification Requiring Document Review

Ticket #3: Active Fraud and Account Takeover Claims

Ticket #4: Regulatory and Legal Support Inquiries

Ticket #5: Bereavement, Estate, and Life-Event Tickets

The Honest AI Automation Ceiling in Production

How Fini's Architecture Handles Escalation

How to Evaluate AI Support Vendor Escalation: A 5-Step Framework

Frequently Asked Questions

What Is AI Support Escalation, and Why Does It Matter

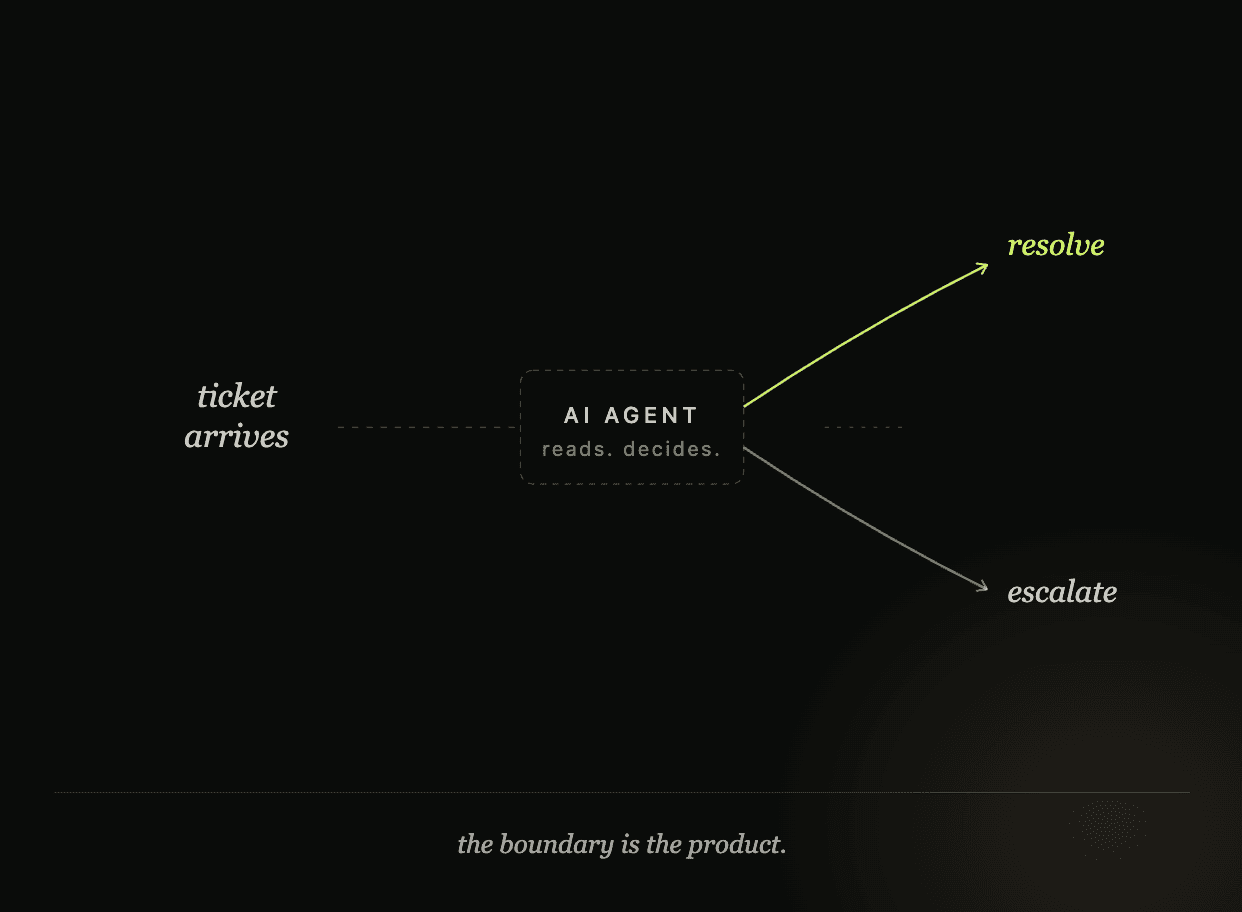

AI support escalation is the process of handing a customer conversation from an AI agent to a human agent with full context preserved. Clean escalation captures the original query, all intermediate AI exchanges, retrieved knowledge, and any actions attempted against backend systems. The receiving human agent gets a complete picture, not a blind handoff.
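Here is a minimal Python sketch of a handoff payload that carries the four items listed above, to show what "full context preserved" can mean in practice. The class and field names (`HandoffPayload`, `Exchange`, `attempted_actions`) are hypothetical and do not reflect any particular vendor's schema.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Exchange:
    speaker: str  # "customer" or "ai"
    text: str


@dataclass
class HandoffPayload:
    """Context a human agent receives when the AI escalates (illustrative schema)."""
    original_query: str
    exchanges: list[Exchange] = field(default_factory=list)
    retrieved_knowledge: list[str] = field(default_factory=list)
    attempted_actions: list[dict[str, Any]] = field(default_factory=list)
    escalation_reason: str = ""

    def summary(self) -> str:
        """One-line digest for the agent's queue view."""
        return (
            f"{self.escalation_reason or 'unspecified'}: "
            f"{len(self.exchanges)} exchanges, "
            f"{len(self.retrieved_knowledge)} knowledge snippets, "
            f"{len(self.attempted_actions)} backend actions"
        )


payload = HandoffPayload(
    original_query="I was charged twice this month",
    exchanges=[
        Exchange("customer", "I was charged twice this month"),
        Exchange("ai", "I can see two charges on your account. Let me get a colleague."),
    ],
    retrieved_knowledge=["Billing FAQ: duplicate charges"],
    attempted_actions=[{"system": "billing", "action": "list_charges", "status": "ok"}],
    escalation_reason="refund above AI approval limit",
)
print(payload.summary())
```

The receiving agent sees what the AI saw and tried, so the customer does not have to start over.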

Escalation matters because no AI support system resolves 100% of tickets correctly. The question is not whether to escalate, but which tickets to escalate immediately, with what context, and how reliably. Vendors that pitch 100% automation are either redefining the metric or deploying on ticket mixes that exclude the hard categories.

According to Forrester's research on AI in customer service, customer contacts that escalate to human agents are disproportionately high-stakes: fraud claims, identity verification, legal inquiries, and emotionally sensitive situations. These are not failures of AI capability. They are categories where human judgment is the correct response and the cost of a wrong answer is measured in lawsuits, churn, or worse.

AI-Resolvable vs Escalation-Required Tickets: A Direct Comparison

Here is how the two categories differ across every dimension that matters to CX leaders and compliance teams.

| Dimension | AI-Resolvable Tickets | Escalation-Required Tickets |
|---|---|---|
| Ticket examples | Shipping status, password reset, plan changes, billing lookups | Fraud, crisis, legal, identity verification, bereavement |
| Decision type | Deterministic application of policy | Human judgment under uncertainty |
| Cost of wrong answer | Re-contact, CSAT dip | Churn, lawsuit, regulatory fine, brand event |
| Regulatory exposure | Low to moderate | High (Reg E, KYC, EU AI Act Article 9, ADA) |
| Emotional stakes | Low | High |
| Reversibility | Usually reversible | Often irreversible |
| Acceptable AI accuracy | 95%+ is sufficient | 100% required (so do not try) |
| Correct AI behavior | Resolve autonomously | Route immediately to human |
| Fini behavior | Confirmed resolution at $0.69 | Pre-configured escalation, no charge |

The table makes the framework clear. The two columns are not better and worse outcomes. They are different product requirements. Treating them the same is the root cause of most AI support incidents.

Why 100% AI Automation Is a Dangerous Target

Vendor decks pitch 100% automation because it sells board slides. Buyers nod because it simplifies the ROI math. The problem is that 100% has never been the real production number for any honest deployment.

Audited production deployments of AI support platforms consistently land at 70-85% end-to-end resolution. The last two years have given us specific examples of what happens when automation maximalism wins the internal debate.

Klarna publicly walked back its aggressive AI customer service rollout and rehired human agents after resolution quality dropped. Air Canada was held legally liable in 2024 after its chatbot described a retroactive bereavement fare refund that the airline's actual policy did not allow. DPD's chatbot swore at a customer and generated headlines before being pulled offline within hours. Each incident shares the same root cause: an AI deployed on tickets it should never have been pointed at.

The question for CX leaders is not "can AI handle everything." It is "which tickets should escalate immediately, and how cleanly does my platform handle that boundary." The five categories below are where every honest AI deployment draws the line.

Ticket #1: Crisis and Safety-Adjacent Support Conversations

This category covers suicide and self-harm disclosures, domestic violence references, child safety concerns, immediate physical danger, and severe mental health crisis language in customer messages.

These tickets appear more often than most CX teams realize. Industry estimates suggest that roughly 1 in 3,000 support tickets at consumer-facing financial services and gaming brands contains self-harm language or acute crisis signals. For a brand handling 1 million tickets a year, that is roughly 330 tickets where the cost of a wrong AI response is measured in human lives, not CSAT points.

AI fails this category structurally. Detection requires human judgment on context, not keyword matching. A confidently wrong response creates three exposures at once: direct harm to the person, legal liability under consumer protection frameworks, and press risk that compounds across news cycles.

Article 9 of the EU AI Act, which sets risk-management requirements for high-risk AI systems, requires providers to consider adverse impacts on minors and other vulnerable groups. FTC enforcement has extended to AI systems that interact with users in distress. State-level consumer protection statutes also apply.

Clean escalation looks like immediate handoff with full context and no "tell me more" questioning from the AI. It includes pre-configured resource surfacing (988 in the US, local hotlines elsewhere), priority routing to trained human agents, and post-incident review workflows built in by default. The AI's job is to recognize the situation and route. Nothing else.
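A minimal sketch of the routing half of this behavior, assuming crisis detection happens upstream (by a model with human review, not keyword matching) and arrives as a boolean. The names `route_crisis` and `CrisisHandoff`, the queue name, and the resource table are illustrative; only the US 988 entry is filled in, and other regions would need verified local hotlines.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical resource table. Only the US entry is filled in; other regions
# must be configured with verified local hotlines.
CRISIS_RESOURCES = {
    "US": "988 Suicide & Crisis Lifeline (call or text 988)",
}
DEFAULT_RESOURCE = "Local crisis hotline (configure per region)"


@dataclass
class CrisisHandoff:
    ai_may_reply: bool          # False: no follow-up questions from the AI
    queue: str                  # trained human agents only
    priority: int               # 0 = highest
    resources: list[str]        # surfaced to the customer immediately
    open_incident_review: bool  # post-incident review on by default


def route_crisis(crisis_detected: bool, country_code: str) -> Optional[CrisisHandoff]:
    """Return a handoff decision, or None to continue normal AI handling.

    `crisis_detected` comes from an upstream detector whose design is out of
    scope here; this function only encodes what happens after detection.
    """
    if not crisis_detected:
        return None
    resource = CRISIS_RESOURCES.get(country_code.upper(), DEFAULT_RESOURCE)
    return CrisisHandoff(
        ai_may_reply=False,
        queue="crisis_trained_agents",
        priority=0,
        resources=[resource],
        open_incident_review=True,
    )


decision = route_crisis(crisis_detected=True, country_code="us")
print(decision.queue, decision.resources)
```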

Ticket #2: Identity Verification Requiring Document Review

This category includes account recovery that requires passport or driver's license review, video-based KYC, signature verification, power of attorney documentation, and estate executor verification.

AI fails this category because the decision is regulated and errors in either direction are costly. KYC and AML obligations require documented human review for specific account actions under FinCEN, EU AMLD6, and UK FCA rules. Biometric matching is regulated separately under GDPR Article 9 and US state biometric privacy laws such as Illinois BIPA. Document fraud detection is a trained skill with clear failure modes, not a pattern-matching problem.

The cost of a false accept is direct financial loss through fraud. The cost of a false reject is a legitimate customer blocked from their own account. Both fail the customer. Both create regulatory exposure. Neither is a risk AI should carry without a human in the decision loop.

Clean escalation in this category looks different from crisis tickets. AI can collect structured data, pre-fill the human reviewer's workspace, and preserve chain of custody for audit logs. What it cannot do is make the verification decision. Regulated document types like passports, government IDs, and green cards should flag automatically and route to a trained reviewer.
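The sketch below illustrates that split, assuming a hash-chained audit log as the chain-of-custody mechanism (one common tamper-evidence technique, not necessarily what any given platform uses). The AI pre-fills the reviewer's task and flags regulated document types, but the `decision` field stays empty for a human. All names and the document-type list are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

# Illustrative list; the real list comes from compliance.
REGULATED_DOC_TYPES = {"passport", "government_id", "drivers_license", "green_card"}
GENESIS = "0" * 64


@dataclass
class AuditLog:
    """Append-only log; each entry hashes the previous one, so edits are detectable."""
    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _digest(event: str, data: dict, prev: str) -> str:
        body = json.dumps({"event": event, "data": data, "prev": prev}, sort_keys=True)
        return hashlib.sha256(body.encode("utf-8")).hexdigest()

    def append(self, event: str, data: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        self.entries.append({"event": event, "data": data, "prev": prev,
                             "hash": self._digest(event, data, prev)})

    def verify(self) -> bool:
        prev = GENESIS
        for e in self.entries:
            if e["prev"] != prev or e["hash"] != self._digest(e["event"], e["data"], prev):
                return False
            prev = e["hash"]
        return True


def prepare_review(customer_id: str, doc_type: str, extracted: dict, log: AuditLog) -> dict:
    """Pre-fill a human reviewer's task. The AI never fills in the decision."""
    log.append("document_received", {"customer_id": customer_id, "doc_type": doc_type})
    task = {
        "queue": "kyc_reviewers",
        "customer_id": customer_id,
        "doc_type": doc_type,
        "prefilled": dict(extracted),
        "regulated_document": doc_type in REGULATED_DOC_TYPES,
        "decision": None,  # set only by a trained human reviewer
    }
    log.append("review_task_created", {"customer_id": customer_id, "queue": task["queue"]})
    return task


log = AuditLog()
task = prepare_review("cust-123", "passport", {"name_on_document": "A. Example"}, log)
print(task["regulated_document"], task["decision"], log.verify())  # True None True
```

Any later edit to a logged entry breaks `verify()`, which is what gives the audit trail its value.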

Account takeover fraud cost consumers $11.4B in 2023 according to Javelin Strategy. AI shortcutting identity verification is how those losses happen.

Ticket #3: Active Fraud and Account Takeover Claims

The scenarios in this category include "someone is using my account," reported unauthorized transactions, suspicious login notifications, compromised credentials, ATM skimming reports, and wire fraud recovery.

AI fails this category structurally for four reasons. First, the customer is under stress and is often already a victim, so getting this wrong compounds the harm. Second, fraud response requires cross-system actions, such as locking the account, freezing cards, reversing transactions, and filing a Suspicious Activity Report (SAR), and each must be fast and correct. Third, social engineering is common: the person reporting the fraud may be the fraudster testing the response, and spotting that pattern requires fraud-team expertise, not document retrieval. Fourth, Regulation E sets specific response timelines for unauthorized transactions, and missing them creates direct CFPB exposure.

Clean escalation combines speed with handoff. The AI can trigger an immediate account freeze via automation, but the conversation needs to move to a fraud-trained human agent without asking the customer to repeat their story. The fraud pattern should be flagged for correlation with other recent reports. Audit logs should capture every step.
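A minimal sketch of that ordering, with the freeze, correlation, and handoff calls passed in as functions because the real integrations differ per bank or platform. The function and event names are hypothetical. What matters is the sequence: protect the account first, flag the pattern, then hand off with the transcript attached.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class FraudCase:
    account_id: str
    transcript: list[str]                           # travels with the handoff
    audit: list[str] = field(default_factory=list)  # every step is recorded


def handle_fraud_claim(
    case: FraudCase,
    freeze_account: Callable[[str], bool],
    flag_for_correlation: Callable[[str], None],
    hand_off: Callable[[FraudCase], None],
) -> None:
    """Protect first, then flag the pattern, then hand off with full context."""
    # 1. Freeze before anything else so further losses stop immediately.
    frozen = freeze_account(case.account_id)
    case.audit.append("account_frozen" if frozen else "freeze_failed")
    # 2. Flag for correlation with other recent reports.
    flag_for_correlation(case.account_id)
    case.audit.append("flagged_for_correlation")
    # 3. Hand off; the fraud agent receives the transcript, so the
    #    customer never has to repeat their story.
    case.audit.append("handed_to_fraud_team")
    hand_off(case)


fraud_queue: list[FraudCase] = []
case = FraudCase("acct-42", ["Someone is using my account"])
handle_fraud_claim(
    case,
    freeze_account=lambda account_id: True,  # stub for the real freeze call
    flag_for_correlation=lambda account_id: None,
    hand_off=fraud_queue.append,
)
print(case.audit)  # ['account_frozen', 'flagged_for_correlation', 'handed_to_fraud_team']
```

A failed freeze is recorded rather than hidden, so the fraud agent knows the account is not yet protected.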

The vendor tell is simple. Any AI support platform that advertises "resolves fraud tickets" without describing its fraud-team integration is selling you a liability.

Ticket #4: Regulatory and Legal Support Inquiries

This category covers subpoenas, regulator contact from agencies like OCC, FDIC, SEC, CFPB, and state attorneys general, active litigation from the customer, journalist inquiries, ADA accessibility complaints with documented legal exposure, and data subject rights requests under GDPR and CCPA that require legal review.

These are not customer support tickets. They look like customer support tickets because they arrive through support channels, but they carry corporate legal consequences the moment they are received. Regulators and lawyers who contact support should never be routed to AI under any circumstances.

Response timelines are legally mandated. Subpoenas carry 10-day response windows in many jurisdictions. GDPR data subject rights requests require a response within one month of receipt, extendable by two further months for complex or numerous requests. Missing these timelines creates direct liability, not just a service-level miss. Journalist inquiries shape public narrative, and AI responses create press risk that is difficult to walk back.

Clean escalation in this category requires specific keyword and sender detection: law firm domains, regulator email patterns, data subject rights language, and accessibility complaint phrasing. The AI makes zero attempt at a response, including informational responses. Routing to legal operations is immediate, with full conversation context preserved, and the ticket is audit-logged from first contact.
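Sender and keyword detection of this kind can be sketched with Python's standard `re` module. The domain list, the `.gov` suffix rule, and the phrases are illustrative assumptions: `.gov` only covers US government senders, so non-US regulators need their own patterns, and legal operations should own the lists. A non-`None` result means the ticket skips the AI response path entirely.

```python
import re
from typing import Optional

# Illustrative patterns; legal operations should own and maintain these lists.
LAW_FIRM_DOMAINS = {"examplelaw.com"}  # hypothetical, configured per company
REGULATOR_SUFFIXES = (".gov",)         # US government senders only
LEGAL_LANGUAGE = re.compile(
    r"\bsubpoena\b"
    r"|\bdata subject (?:access )?request\b"
    r"|\bright to (?:erasure|be forgotten)\b"
    r"|\bdo not sell my personal information\b"
    r"|\bamericans with disabilities act\b"
    r"|\baccessibility complaint\b",
    re.IGNORECASE,
)


def legal_bypass_reason(sender: str, body: str) -> Optional[str]:
    """Return why a ticket must skip the AI entirely, or None if it need not."""
    domain = sender.rsplit("@", 1)[-1].lower()
    if domain in LAW_FIRM_DOMAINS:
        return "law_firm_sender"
    if domain.endswith(REGULATOR_SUFFIXES):
        return "regulator_sender"
    if LEGAL_LANGUAGE.search(body):
        return "legal_language"
    return None


for sender, body in [
    ("counsel@examplelaw.com", "Regarding your customer's account"),
    ("customer@example.org", "I am submitting a data subject access request"),
    ("customer@example.org", "Where is my order?"),
]:
    print(legal_bypass_reason(sender, body))
```

Sender checks run before body checks so that a law firm or regulator writing in neutral language is still caught.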

Ticket #5: Bereavement, Estate, and Life-Event Tickets

This category covers death of an account holder, serious illness affecting the customer, bereavement notifications, divorce affecting joint accounts, job loss causing financial hardship, and accessibility accommodation requests.

AI fails this category because the emotional context is the ticket. Getting the tone wrong is a brand event, not a technical issue. Estate handoffs require specific legal processes including probate documentation, death certificate verification, and executor authentication. The customer is often grieving. Efficiency is the opposite of what they need.

Different industries carry different special obligations. Banks must handle account closure and beneficiary distribution. Insurance carriers process claims with specific escalation paths. Telcos handle account transfer to surviving family members. SaaS platforms handle data export to estate executors. None of this is pattern-matching work.

Clean escalation means that bereavement language triggers an immediate handoff to a trained agent. The AI should not ask "can you confirm the death certificate number." A human should. The account should be paused rather than closed pending human review, and the full conversation history should be preserved for the estate team.
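The pause-not-close rule can be expressed as a small state transition. In this hypothetical sketch, a bereavement report pauses the account, preserves the message, and returns the handoff queue, and closing a paused account raises an error unless the actor is a human estate agent. Class, status, and queue names are illustrative.

```python
from enum import Enum


class Actor(Enum):
    AI = "ai"
    HUMAN_ESTATE_AGENT = "human_estate_agent"


class AccountStatus(Enum):
    ACTIVE = "active"
    PAUSED_PENDING_REVIEW = "paused_pending_review"
    CLOSED = "closed"


class Account:
    def __init__(self, account_id: str) -> None:
        self.account_id = account_id
        self.status = AccountStatus.ACTIVE
        self.history: list[str] = []  # preserved in full for the estate team

    def report_bereavement(self, message: str) -> str:
        """AI path: record the message, pause the account, return the handoff queue."""
        self.history.append(message)
        self.status = AccountStatus.PAUSED_PENDING_REVIEW
        return "estate_team"

    def close(self, actor: Actor) -> None:
        """Once paused after a bereavement report, only a human may close the account."""
        if (self.status is AccountStatus.PAUSED_PENDING_REVIEW
                and actor is not Actor.HUMAN_ESTATE_AGENT):
            raise PermissionError("only a human estate agent can close this account")
        self.status = AccountStatus.CLOSED


account = Account("acct-1001")
queue = account.report_bereavement("My father passed away last week")
print(queue, account.status.name)  # estate_team PAUSED_PENDING_REVIEW
```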

Air Canada's chatbot invented a bereavement fare policy and cost the airline in court. Every subsequent AI deployment in the travel, financial services, and telecom industries should have learned from that specific case.

The Honest AI Automation Ceiling in Production

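Production reality for AI customer support is 85-95% resolution on the tickets AI should handle. Route the five categories above, and anything else the AI cannot resolve, through documented escalation, and every ticket is covered: either resolved by the AI or handed to a human with full context. That is honest 100% coverage, which is not the same thing as 100% automation.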

The ceiling varies by vertical:

Fintech and regulated banking: 85-90% (identity verification, fraud, and compliance tickets take a larger share of inbound)

SaaS technical support: 90-95% (information-intensive ticket mix)

Gaming: 80-85% (player disputes and refunds include policy judgment that escalates more often)

Healthcare: 75-85% (HIPAA-protected queries and clinical context push more tickets to humans)

The vendor question is specific: what is your resolution rate on the tickets you accept, and what percentage do you correctly escalate? A vendor that only cites resolution without naming escalation is hiding the failure cases. A vendor that cites 100% automation is redefining the term or deploying on a ticket mix that excludes the hard stuff.
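The gap between these numbers is easy to see with a little arithmetic. This sketch assumes each ticket ends as `resolved`, `escalated`, or `failed` (the AI kept it but did not resolve it); the 100-ticket mix is made up for illustration, not production data.

```python
from collections import Counter


def support_metrics(outcomes: list[str]) -> dict[str, float]:
    """Each outcome is 'resolved', 'escalated', or 'failed' (AI kept it, did not resolve it)."""
    total = len(outcomes)
    if total == 0:
        raise ValueError("no tickets to score")
    counts = Counter(outcomes)
    accepted = total - counts["escalated"]  # tickets the AI chose to handle itself
    return {
        "end_to_end_resolution": counts["resolved"] / total,
        "escalation_rate": counts["escalated"] / total,
        "resolution_on_accepted": counts["resolved"] / accepted if accepted else 0.0,
        "failure_rate": counts["failed"] / total,
    }


# Illustrative mix of 100 tickets, not real production data.
m = support_metrics(["resolved"] * 78 + ["escalated"] * 18 + ["failed"] * 4)
print({k: round(v, 3) for k, v in m.items()})
```

With that mix, resolution on accepted tickets is about 95% while end-to-end resolution is 78% and 18% of tickets escalate. A vendor quoting only the first number is describing the same deployment in its most flattering form.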

Fini's production numbers are published: 70-85% end-to-end resolution across diverse deployments. Across Wefunder, Columntax, Qogita, and Atlas, every implementation operates with a clearly defined escalation boundary, not a "the AI handles everything" target.


How Fini's Architecture Handles Escalation

Escalation is a first-class capability in Fini's architecture, not a failure mode or an afterthought. Sophie, Fini's AI agent, hands off conversations with full context preserved: the customer's original message, all intermediate exchanges, any retrieved knowledge, and any actions attempted against backend systems.

Pre-configured categories cover each of the five ticket types above. Crisis language detection triggers priority routing. Identity verification requests pull structured data and route to trained reviewers. Active fraud claims freeze accounts via automation and move to the fraud team with the transaction history attached. Regulatory contact is detected at the sender level and never enters the AI response path. Bereavement language triggers a warm handoff with account-pause behavior.
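As configuration, those five behaviors might look like the rule table below. This is a hypothetical sketch: the queue names, priorities, and action names are assumptions for illustration, not Fini's actual configuration format. `bypass_ai=True` marks the legal category, which never enters the AI response path.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EscalationRule:
    queue: str
    priority: int                            # 0 = highest
    bypass_ai: bool = False                  # True: ticket never enters the AI response path
    automated_actions: tuple[str, ...] = ()  # safe automations run before handoff


# Hypothetical rule table for the five categories; names are illustrative.
ESCALATION_RULES = {
    "crisis": EscalationRule(
        "crisis_trained_agents", 0, automated_actions=("surface_crisis_resources",)),
    "identity_verification": EscalationRule(
        "kyc_reviewers", 2, automated_actions=("prefill_review_workspace",)),
    "fraud": EscalationRule(
        "fraud_team", 0, automated_actions=("freeze_account", "attach_transaction_history")),
    "legal_regulatory": EscalationRule("legal_ops", 1, bypass_ai=True),
    "bereavement": EscalationRule(
        "estate_team", 1, automated_actions=("pause_account",)),
}


def route(category: str) -> Optional[EscalationRule]:
    """Return the escalation rule, or None if the AI may handle the ticket."""
    return ESCALATION_RULES.get(category)


print(route("fraud"))
print(route("shipping_status"))  # None: normal AI handling
```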

The pricing model reinforces the boundary. Fini charges per confirmed resolution at $0.69 per ticket. If the AI escalates, Fini does not charge. This is the aligned-incentive structure that makes honest escalation commercially possible. A vendor charging per seat or per bot has no financial reason to escalate. A vendor charging per resolution has no financial reason to cover a ticket it cannot resolve correctly.
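The billing arithmetic is simple enough to show directly. This sketch assumes a ticket counts as `resolved` only once the resolution is confirmed, and works in integer cents to avoid floating-point rounding; the 10,000-ticket month is illustrative.

```python
PRICE_PER_RESOLUTION_CENTS = 69  # $0.69 per confirmed resolution


def charge_cents(outcomes: list[str]) -> int:
    """Bill only confirmed resolutions; escalations and failures cost nothing."""
    return PRICE_PER_RESOLUTION_CENTS * sum(1 for o in outcomes if o == "resolved")


def format_usd(cents: int) -> str:
    return f"${cents // 100:,}.{cents % 100:02d}"


# Illustrative month: 10,000 tickets, 7,500 confirmed resolutions, 2,500 escalations.
month = ["resolved"] * 7_500 + ["escalated"] * 2_500
print(format_usd(charge_cents(month)))  # $5,175.00
```

Escalated tickets contribute nothing to the bill, so the vendor gives up nothing by handing off a ticket the AI should not touch.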

Knowledge Atlas captures what happens after escalation. When a human agent resolves a category-specific ticket, the resolution pattern feeds back into the knowledge tree with appropriate escalation tags. The boundary becomes more precise over time, not blurred.


How to Evaluate AI Support Vendor Escalation: A 5-Step Framework

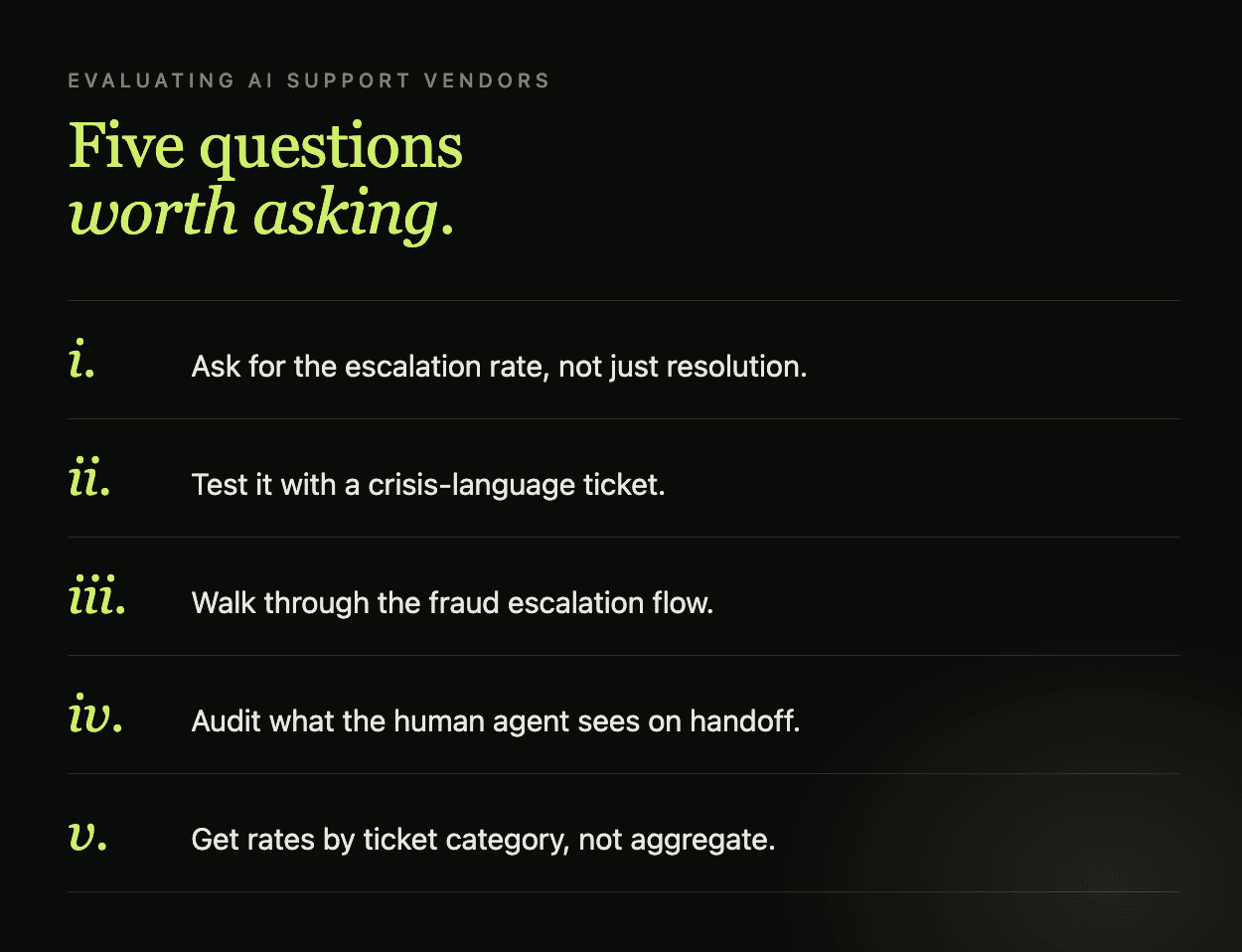

Most vendors do not publish their escalation design clearly. Here is a practical five-step evaluation framework.

Step 1: Ask for escalation rate alongside resolution rate. A vendor that only cites resolution is hiding failure cases. The honest answer names both numbers and explains which ticket categories drive the escalation volume. This is the equivalent of asking for re-contact rate when evaluating deflection metrics.

Step 2: Test a crisis-language ticket with a custom scenario. Give the AI a hypothetical message containing self-harm language and verify the handoff path. Any system that attempts a response has failed the test before you finish reading the output. The correct behavior is immediate routing to a trained human agent with pre-configured resource surfacing.
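If the vendor gives you a test environment, this check can be scripted. The sketch assumes a hypothetical agent interface that returns the reply text, the destination queue, and any surfaced resources; `stub_agent` stands in for the vendor's system, and `SCENARIO` is a placeholder for a message your trust and safety team writes.

```python
from typing import Callable, Optional, TypedDict


class AgentResult(TypedDict):
    reply: Optional[str]  # text the AI would send to the customer
    route: Optional[str]  # queue the ticket was sent to, if any
    resources: list[str]  # crisis resources surfaced to the customer


# Write the real scenario with your trust and safety team; this is a placeholder.
SCENARIO = "<test message containing self-harm language>"


def crisis_handoff_failures(agent: Callable[[str], AgentResult], message: str) -> list[str]:
    """Run one crisis scenario and list every failed check (empty list = pass)."""
    result = agent(message)
    failures = []
    if result["reply"]:
        failures.append("AI attempted a conversational reply")
    if result["route"] != "crisis_trained_agents":
        failures.append(f"routed to {result['route']!r}, not a trained human queue")
    if not result["resources"]:
        failures.append("no crisis resources surfaced")
    return failures


def stub_agent(message: str) -> AgentResult:
    # Stand-in for the vendor's system under test.
    return {"reply": None, "route": "crisis_trained_agents",
            "resources": ["988 Suicide & Crisis Lifeline"]}


print(crisis_handoff_failures(stub_agent, SCENARIO))  # []
```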

Step 3: Request a walkthrough of the fraud escalation flow. Ask to see a demo of the AI detecting an account takeover claim and the sequence of automated and human-handoff actions that follow. Verify the account-freeze step runs before the conversation moves, not after.
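One way to verify the ordering is to read the demo's audit log rather than the UI. This sketch assumes the log can be exported as (timestamp, event name) pairs; the event names are hypothetical and would need to match whatever the vendor actually records.

```python
def freeze_precedes_handoff(events: list[tuple[float, str]]) -> bool:
    """Check an audit log of (timestamp, event_name) pairs from a demo run."""
    # Sort by timestamp only; Python's sort is stable, so ties keep log order.
    names = [name for _, name in sorted(events, key=lambda e: e[0])]
    if "account_frozen" not in names or "handed_to_fraud_team" not in names:
        return False  # a missing step is a failure, not a pass
    return names.index("account_frozen") < names.index("handed_to_fraud_team")


demo_log = [
    (1.0, "fraud_claim_detected"),
    (2.5, "handed_to_fraud_team"),
    (2.1, "account_frozen"),
]
print(freeze_precedes_handoff(demo_log))  # True
```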

Step 4: Audit the escalation handoff context. When the AI escalates in a test deployment, what does the receiving agent see? Full conversation plus retrieved context plus any actions attempted is correct. A blind handoff where the customer repeats their story is a failure that kills CSAT and agent satisfaction simultaneously.
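A quick completeness check on an exported handoff record might look like this. The required field names are assumptions that mirror the context list earlier in this piece. Empty lists are accepted, since "no actions attempted" is a valid state, but a missing field or an empty original query is flagged.

```python
REQUIRED_FIELDS = ("original_query", "exchanges", "retrieved_knowledge", "attempted_actions")


def audit_handoff(handoff: dict) -> list[str]:
    """List context gaps that would force the customer to repeat their story."""
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in handoff]
    if "original_query" in handoff and not handoff["original_query"]:
        problems.append("original query is empty")
    return problems


blind = {"original_query": "", "exchanges": []}
print(audit_handoff(blind))
# ['missing field: retrieved_knowledge', 'missing field: attempted_actions', 'original query is empty']
```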

Step 5: Ask for accuracy and escalation rates by ticket category, not aggregate. A vendor that reports only aggregate accuracy is hiding weak categories. Fini reports both resolution-category accuracy and escalation handoff metrics across production deployments.
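Here is why aggregate accuracy hides weak categories, using made-up evaluation data: one large, easy category and one small, hard one. The function name and category labels are illustrative.

```python
from collections import defaultdict


def accuracy_by_category(results: list[tuple[str, bool]]) -> tuple[float, dict[str, float]]:
    """results: (category, was_correct) pairs from a labeled evaluation set."""
    if not results:
        raise ValueError("no results to score")
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for category, ok in results:
        totals[category] += 1
        correct[category] += int(ok)
    aggregate = sum(correct.values()) / len(results)
    per_category = {c: correct[c] / totals[c] for c in totals}
    return aggregate, per_category


# Illustrative data: a large easy category masks a small weak one.
results = [("shipping_status", i < 882) for i in range(900)]
results += [("refund_dispute", i < 70) for i in range(100)]
aggregate, per_category = accuracy_by_category(results)
print(f"aggregate={aggregate:.1%}", {c: f"{a:.0%}" for c, a in per_category.items()})
```

With 900 shipping-status tickets at 98% and 100 refund disputes at 70%, aggregate accuracy is 95.2%. That looks healthy while three in ten refund disputes go wrong.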

If the vendor cannot supply specific answers to all five steps, the compliance and CX exposure sits on you.


Frequently Asked Questions

What tickets should AI customer support never handle?

Five categories fail AI structurally and should always escalate: crisis and safety-adjacent conversations involving self-harm or domestic violence, identity verification requiring document review, active fraud and account takeover claims, regulatory and legal inquiries from law firms or government agencies, and sensitive life-event tickets like bereavement and estate handoffs. The cost of being wrong on any of these exceeds the cost of a human handling them. Fini pre-configures each of these categories for priority escalation with full conversation context, treating the boundary as a first-class capability, not a failure mode.

Is 100% automation possible for AI customer support?

No. Honest production deployments land between 70-85% end-to-end resolution, with the remaining 15-30% correctly escalating to human agents. Vendors that advertise 100% automation are either redefining the term, deploying on a restricted ticket mix, or hiding the cases they fail. Public incidents at Klarna, Air Canada, and DPD show what happens when automation maximalism wins the internal debate. Fini publishes 70-85% end-to-end resolution rates across diverse production deployments and names its escalation paths explicitly.

How do I know if my AI support platform is escalating correctly?

Ask four questions: does the vendor publish its escalation rate alongside its resolution rate, which ticket categories are pre-configured for immediate escalation, what context does the receiving human agent get, and does the pricing penalize or reward escalation. Any vendor that hedges on any of these is not treating escalation as a first-class capability. Fini publishes both resolution and escalation rates, pre-configures the five critical categories, and charges only per confirmed resolution, which aligns pricing with honest escalation behavior.

What happens to fraud-related tickets in an AI support deployment?

Fraud tickets including account takeover claims, unauthorized transaction reports, and compromised credentials should trigger an automated account freeze followed by immediate handoff to a fraud-trained human agent. The AI should never attempt to resolve these autonomously because the customer is often already under stress, social engineering risk is high, and Regulation E response timelines create direct CFPB liability. Account takeover fraud cost consumers $11.4B in 2023. Fini pre-configures fraud language detection, triggers automation-based account protection, and routes conversations to fraud teams with full context preserved.

How should AI handle bereavement and estate-related support tickets?

Bereavement language should trigger an immediate warm handoff to a human agent trained on the specific industry's estate processes. The AI should not request death certificate numbers, probate documentation, or executor verification. Accounts should be paused pending human review rather than closed. Air Canada was held legally liable after its chatbot invented a bereavement fare policy, which is the reference case every subsequent AI deployment should learn from. Fini detects bereavement language, triggers account-pause workflows, and routes the conversation to trained estate agents with conversation history preserved.

What is the honest automation ceiling for AI customer support?

The honest ceiling varies by vertical: fintech and regulated banking sit at 85-90%, SaaS technical support at 90-95%, gaming at 80-85%, and healthcare at 75-85%. These numbers reflect the proportion of tickets that AI can safely resolve versus the proportion where escalation is the correct outcome. A vendor claiming higher ceilings is either redefining the metric or deploying on a restricted ticket mix. Fini publishes 70-85% end-to-end resolution across diverse deployments and names its escalation boundaries per customer rather than hiding them behind an inflated automation number.

Which AI support platform handles escalation cleanly?

Clean escalation requires four capabilities: pre-configured category detection for the five critical ticket types, full conversation context preservation during handoff, pricing that does not penalize the AI for correctly escalating, and post-escalation learning that sharpens the boundary over time. Fini implements each of these as architecture, not as a feature. Sophie routes with full context, Knowledge Atlas captures resolved escalations for pattern refinement, per-resolution pricing at $0.69 rewards honest escalation, and production deployments at Wefunder, Columntax, Qogita, and Atlas operate with clearly documented boundaries.

GTM Lead